Billing and coding organizations invest heavily in clean claim rates.

Quality checks, claim scrubbers, staff training, and coding certification are all designed to maximize the percentage of claims that pass without rejection or denial on first submission. And yet, according to the 2025 State of Claims report from Experian Health, 68% of providers say submitting clean claims is more challenging today than it was a year ago. That number has moved in the wrong direction despite significant investment across the industry.

The explanation that most organizations reach for is increased payer scrutiny: more rigorous medical necessity reviews, expanding prior authorization requirements, AI-driven denial algorithms that are faster and more exacting than human reviewers. Those are real factors. They are also downstream variables that billing firms cannot control.

What billing firms can control is the quality of the data that underlies every claim they submit. And the evidence is clear that a significant share of clean claim failures are provider data failures, not coding failures.

The Data Behind the Rate

Experian Health’s 2025 analysis identifies missing or inaccurate data as the cause of 50% of all claim denials. Within that category, incorrect or missing provider information (NPI, taxonomy, rendering vs. billing provider distinctions, network status, credentialing validity) is among the most consistently cited drivers of first-submission rejection.

Aptarro’s 2025/2026 benchmarking data shows that practices with poor billing automation and outdated provider data see denial rates of 15 to 20%, compared to a benchmark of 5 to 7% for well-managed organizations. That spread of roughly 8 to 15 percentage points represents the addressable gap: the claims that would pass on first submission if the underlying provider data were accurate.

For a billing organization submitting 10,000 claims per month, the difference between a 7% denial rate and a 15% denial rate is 800 additional denied claims every month. At an average rework cost of $47.77 (Medicare Advantage) to $63.76 (commercial), that gap represents $38,000 to $51,000 in monthly rework cost, before accounting for the revenue on claims that are never resubmitted.
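The arithmetic behind those figures is easy to rerun with your own volumes. The sketch below is only a back-of-the-envelope calculator: the function name and parameters are illustrative, and the inputs are the numbers quoted above.

```python
def monthly_rework_cost(claims_per_month, benchmark_rate, observed_rate, cost_per_claim):
    """Return (extra denied claims, rework cost) for one month.

    Rates are fractions (0.07 means 7%). cost_per_claim is the average
    cost to rework a single denied claim.
    """
    # Denials above what a well-managed organization would see.
    extra_denials = round(claims_per_month * (observed_rate - benchmark_rate))
    return extra_denials, extra_denials * cost_per_claim


# Figures from the paragraph above: 10,000 claims, 7% vs. 15% denial rate.
extra, low_cost = monthly_rework_cost(10_000, 0.07, 0.15, 47.77)   # Medicare Advantage
_, high_cost = monthly_rework_cost(10_000, 0.07, 0.15, 63.76)      # commercial

print(f"{extra} extra denials per month")               # 800 extra denials per month
print(f"${low_cost:,.0f} to ${high_cost:,.0f} in rework")  # $38,216 to $51,008 in rework
```

Swapping in your own claim volume and denial rate gives a first estimate of the gap. It does not capture revenue lost on claims that are never resubmitted.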

Dastify Solutions, which attributes its 98.5% clean-claim rate specifically to front-end error prevention, illustrates what is achievable when provider data quality is treated as a prerequisite for submission rather than an afterthought. That benchmark matters not just as an aspiration but as evidence that the gap between current industry denial rates and what is achievable is largely a provider data problem, not a coding problem.

Why Clean Claim Technology Has Limits Without Clean Data

The industry has invested heavily in claim scrubbing technology, and those investments have delivered real value. Claim scrubbers catch formatting errors, incomplete fields, and coding inconsistencies before claims reach payers. They are effective at what they are designed to do.

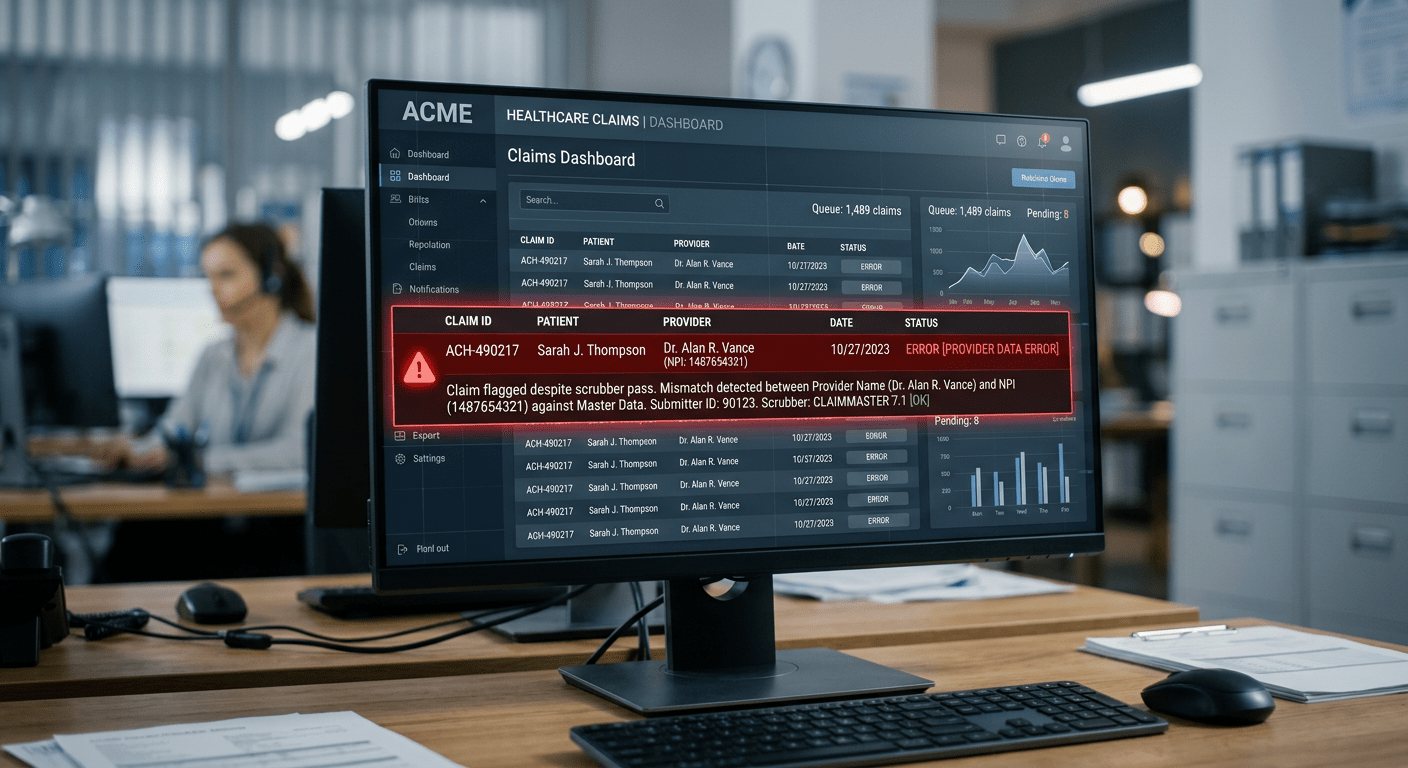

What they cannot do is validate whether the provider data feeding those claims is currently accurate. A claim scrubber will confirm that an NPI is correctly formatted and that a taxonomy code is valid. It will not confirm that the NPI belongs to the rendering provider identified on the claim, that the provider is currently enrolled with the payer, or that their credentialing status is active as of the date of service. Those validations require infrastructure that operates at the provider identity level continuously, not just at the point of claim submission.
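To make the distinction concrete, here is a minimal sketch of the two kinds of check. The format check uses the published NPI check-digit rule (a Luhn checksum over the NPI prefixed with 80840). Everything in the second half is hypothetical: `ProviderRecord`, its fields, and `provider_data_problems` stand in for whatever provider data source an organization actually maintains.

```python
from dataclasses import dataclass
from datetime import date


def npi_format_ok(npi: str) -> bool:
    """Format-level check of the kind a scrubber can do: 10 digits, valid check digit."""
    if len(npi) != 10 or not (npi.isascii() and npi.isdigit()):
        return False
    total = 0
    # Luhn checksum over the NPI with the 80840 prefix, read right to left.
    for i, ch in enumerate(reversed("80840" + npi)):
        d = int(ch)
        if i % 2 == 1:      # double every second digit
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


@dataclass
class ProviderRecord:       # hypothetical record shape, for illustration only
    npi: str
    payer_id: str
    enrolled_from: date
    enrolled_through: date
    credentialed_through: date


def provider_data_problems(record, rendering_npi, payer_id, date_of_service):
    """Identity-level checks a format-only scrubber does not perform."""
    problems = []
    if record.npi != rendering_npi:
        problems.append("NPI does not match rendering provider")
    enrolled = (record.payer_id == payer_id
                and record.enrolled_from <= date_of_service <= record.enrolled_through)
    if not enrolled:
        problems.append("not enrolled with payer on date of service")
    if date_of_service > record.credentialed_through:
        problems.append("credentialing not active on date of service")
    return problems


# A well-formed NPI attached to a stale enrollment record (made-up data).
record = ProviderRecord("1234567893", "PAYER-A", date(2023, 1, 1),
                        date(2024, 12, 31), date(2025, 6, 30))
print(npi_format_ok("1234567893"))
print(provider_data_problems(record, "1234567893", "PAYER-A", date(2025, 2, 10)))
```

In this made-up case the NPI passes the format check, yet the claim would still be at risk because the enrollment lapsed before the date of service.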

This is the gap that clean claim technology cannot close on its own. It is also the gap that explains why 68% of providers report clean claim submission becoming harder despite continued technology investment: the tool is working correctly, but the data it is working from has drifted. As we have traced across the last few articles, from entry points in The $125B Problem to per-claim costs in What a Denied Claim Actually Costs to staff burden in Inside the Staffing Crisis, the root of that drift is consistently provider data that was not maintained at the infrastructure level.

For submission staff: When a claim scrubber clears a claim and it still gets denied for a provider data issue, that is the gap made visible. The claim looked clean at submission because the data looked clean, but the underlying provider record was stale. Building a log of those denials, organized by provider and denial reason, gives leadership the evidence they need to address the source.
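A log like that does not need special tooling to start. As a rough sketch, assuming each denial is recorded with a provider NPI and a free-text reason (the field names and sample entries below are invented for illustration), a simple tally by provider and reason might look like this:

```python
from collections import Counter

# Hypothetical log entries: scrubber-cleared claims later denied for provider data.
denial_log = [
    {"provider_npi": "1234567893", "reason": "not enrolled with payer"},
    {"provider_npi": "1234567893", "reason": "not enrolled with payer"},
    {"provider_npi": "1234567893", "reason": "taxonomy mismatch"},
    {"provider_npi": "1111111112", "reason": "credentialing lapsed"},
]


def summarize_denials(log):
    """Count denials per (provider, reason) pair, most frequent first."""
    counts = Counter((entry["provider_npi"], entry["reason"]) for entry in log)
    return counts.most_common()


for (npi, reason), count in summarize_denials(denial_log):
    print(f"{npi}  {reason}: {count}")
```

Sorted this way, the providers and reasons that generate the most repeat denials rise to the top, which is the evidence leadership needs to see.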

Do You Want to See What Provider Data Accuracy Can Do for Your Bottom Line?

If any of this resonates with what your team is dealing with, the next step is a conversation. Schedule a Strategy Session. No sales pitch, just a working discussion about your current data posture and the revenue you’re leaving on the table.

We invite you to learn more through third-party and Datagence resources:

- Datagence: The Provider Enforcement Reckoning

Opt in to the Datagence Trust Center to receive alerts about new content. No nurture emails. No sales calls. Just information as it's released.