If your organization has spent the last several years working on a provider data project (building internal pipelines, layering on vendors, or trying to designate a system of record), you are not alone. Most health plans have. And most of them still have the same problem they started with.

Not because their teams weren’t capable. Not because the intent wasn’t right. But because the approach treated a continuous, infrastructure-level challenge as a solvable IT project.

Our last few articles have traced the full arc of what bad provider data actually costs:

- The hidden tax on the revenue cycle – The Hidden Tax on Your Revenue Cycle: What Provider Data Errors Actually Cost

- The way it silently undermines auto-adjudication – Auto-Adjudication Is a Provider Data Problem in Disguise

- How it erodes Stars ratings and quality bonus eligibility – The Stars Are Watching: How Provider Data Accuracy Flows Through to MA Quality Ratings and Revenue

- Why the $21 billion automation opportunity keeps slipping because the data foundation isn’t ready to support it – Why the $21B Automation Opportunity Starts with Provider Data – Not Technology

What we haven’t addressed directly is the uncomfortable pattern that keeps most organizations from solving the problem at all: they keep trying to project their way out of an infrastructure problem.

The Internal Build Trap

The instinct to build internally is understandable. Your team knows the data. They understand your systems. They can build something customized to your workflows and your payer-specific requirements.

The problem is the timeline.

The Datagence team recently learned of an instructive example from a large healthcare organization. The organization made a significant capital investment over a two-year period to clean up provider data for a single state in its network. That timeline is not an anomaly. It reflects the genuine complexity of navigating internal politics, designing a governance architecture, then building ingestion pipelines, entity resolution logic, validation rules, audit trail architecture, and compliance workflows from the ground up, all while maintaining existing operations and meeting regulatory deadlines that don’t pause for build cycles.

CMS-4208-F2 is live, making Medicare Advantage provider directories publicly visible in the Medicare Plan Finder. The REAL Health Providers Act is advancing in Congress, with a 90-day verification mandate that would apply broadly across the industry. Organizations that began internal builds in 2022 are still building while enforcement timelines are running.

The regulatory clock doesn’t have a “project in progress” exception.

The Vendor Stack Trap

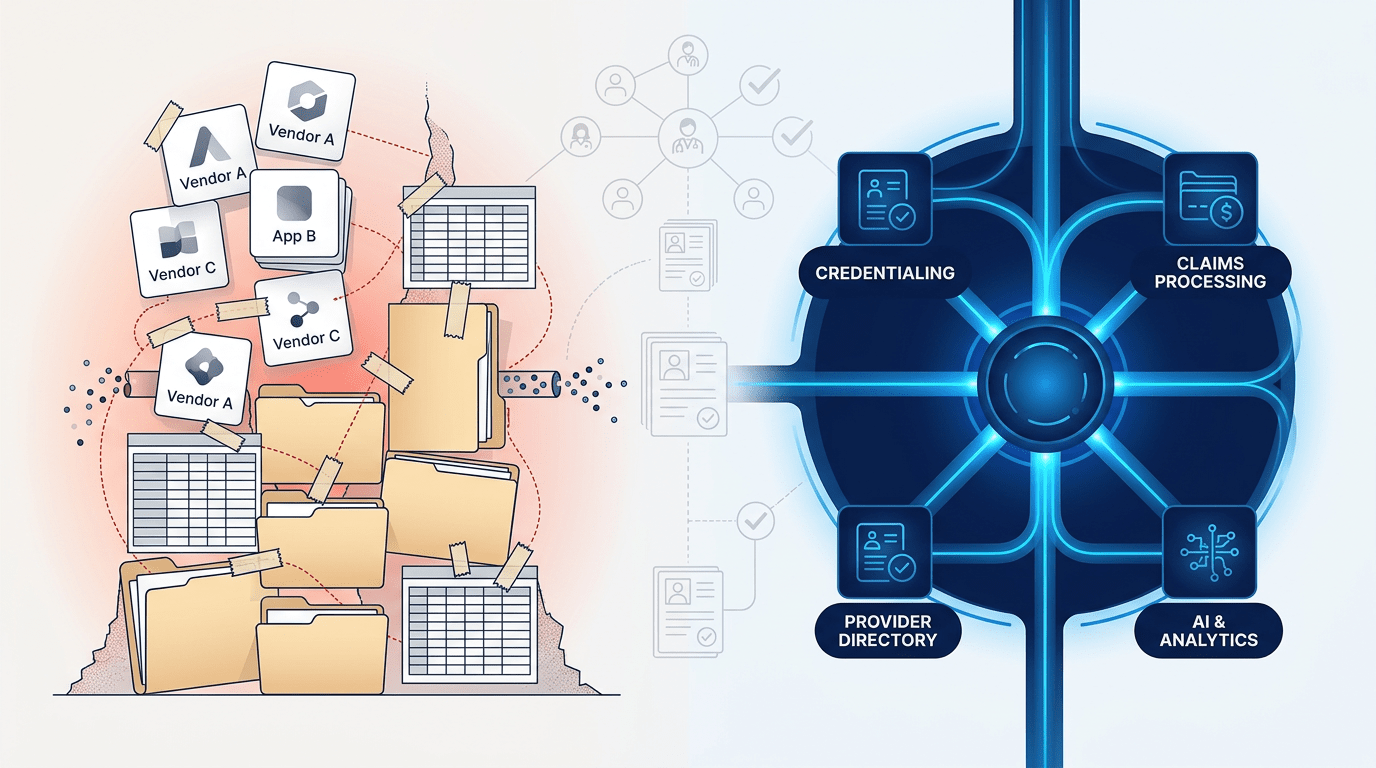

For organizations that decided not to build, the typical alternative has been to assemble. A directory vendor here. A credentialing system there. An NPI lookup tool. Perhaps an ETL layer to move data between them.

The result is a patchwork that addresses fragments of the problem without addressing the problem itself.

The core issue, as this series has established, is not that organizations lack data tools. It is that the same provider appears differently across a dozen systems, with no unified identity, no continuous validation mechanism, and no authoritative source of record that every downstream process can trust.

A directory vendor fixes the directory output. It doesn’t fix the credentialing record that fed it, or the claims system that contradicts both. A credentialing platform manages licenses and enrollment at a point in time. It doesn’t continuously reconcile the roster file submitted three months ago against what the NPI registry says today.

Each tool solves a fragment. None of them solves the underlying structural gap: fragmented identity, episodic validation, and no single source of truth that all systems consume.
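The fragmentation described above can be made concrete with a small sketch. The Python below is an illustration only: the system names, record fields, and sample values are hypothetical, not drawn from any real plan or vendor. It groups records by NPI and reports every field on which the systems disagree.

```python
from collections import defaultdict

# Hypothetical snapshots of one provider as held by three separate systems.
# The NPI, addresses, and taxonomy codes are illustrative values only.
records = [
    {"system": "directory", "npi": "1234567890",
     "address": "100 Main St", "taxonomy": "207Q00000X"},
    {"system": "credentialing", "npi": "1234567890",
     "address": "100 Main St", "taxonomy": "207R00000X"},
    {"system": "claims", "npi": "1234567890",
     "address": "55 Oak Ave", "taxonomy": "207Q00000X"},
]


def find_conflicts(records, fields=("address", "taxonomy")):
    """Return {npi: {field: {value: [systems]}}} for fields where systems disagree."""
    by_npi = defaultdict(list)
    for rec in records:
        by_npi[rec["npi"]].append(rec)

    conflicts = {}
    for npi, recs in by_npi.items():
        for field in fields:
            # Collect which systems report which value for this field.
            values = defaultdict(list)
            for rec in recs:
                values[rec[field]].append(rec["system"])
            # More than one distinct value means the systems disagree.
            if len(values) > 1:
                conflicts.setdefault(npi, {})[field] = dict(values)
    return conflicts


conflicts = find_conflicts(records)
for npi, fields in conflicts.items():
    for field, values in fields.items():
        print(npi, field, values)
```

In this sample, no single tool is wrong in isolation, yet the three systems disagree on both address and taxonomy. Detecting the disagreement is the easy part. Deciding which value every downstream system should consume is what a patchwork of point tools leaves unanswered.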

The Experian Health 2025 State of Claims survey found denial rates are still rising, despite years of investment in claims and data quality tooling. That is the vendor stack trap made visible in the numbers.

The Periodic Cleanup Trap

There is a third pattern that deserves its own examination, because it can look like a solution while the problem keeps compounding underneath it.

Annual provider data cleanup exercises. Quarterly roster verification projects. Periodic audits that produce a report, generate remediation tasks, and close with an updated roster that is already drifting by the time the project report is filed.

The reason these cycles never close the gap is that provider data is not static. Addresses change. Network participation status changes. Taxonomy codes get updated. Affiliations shift. A provider relocates to a new practice. A credentialing record that was accurate at the last audit may be meaningfully wrong six weeks later.

A cleanup project that runs quarterly delivers four brief windows of improved accuracy per year, with the data drifting out of date through most of each quarter in between. That cadence cannot satisfy No Surprises Act (NSA) 48-hour update requirements. It cannot support auto-adjudication at a standard that reduces the nationally documented $25.7 billion annual cost of claims adjudication failures, 70% of which are avoidable according to Premier’s 2025 analysis. And it cannot power AI initiatives that require data accurate enough to support automated decisions, as the AHA’s review of claims denial automation makes clear.
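A rough calculation shows why cadence matters. The sketch below is a simplification, not a model of any real plan: it assumes provider changes occur uniformly over time and are corrected only at the next scheduled cleanup. The function name and the two-day threshold parameter (standing in for the 48-hour requirement mentioned above) are illustrative choices.

```python
def cleanup_staleness(cleanups_per_year, threshold_days=2.0, days_per_year=365.0):
    """Staleness of an uncorrected change under a fixed cleanup cadence.

    Assumes changes occur uniformly at random in time and are corrected
    only at the next cleanup, which is a deliberate simplification.
    """
    interval = days_per_year / cleanups_per_year
    return {
        "interval_days": interval,
        # A change lands at a random point in the interval, so on average
        # it waits half the interval before the next cleanup catches it.
        "mean_wait_days": interval / 2,
        # Only changes landing in the final `threshold_days` of an interval
        # are corrected within the threshold.
        "share_over_threshold": max(0.0, (interval - threshold_days) / interval),
    }


for label, n in [("annual", 1), ("quarterly", 4), ("monthly", 12)]:
    r = cleanup_staleness(n)
    print(f"{label:>9}: mean wait {r['mean_wait_days']:.1f} days, "
          f"{r['share_over_threshold']:.0%} of changes wait longer than 2 days")
```

Under these assumptions, a quarterly cadence leaves the average change uncorrected for about 46 days, and nearly all changes wait longer than two days. Increasing the frequency narrows the gap, but only continuous validation closes it.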

The periodic cleanup model doesn’t solve the provider data problem. It manages the visible surface of it while the structural gap persists underneath.

What “Done” Actually Looks Like

Done is not a clean roster file. Done is not a dashboard showing improved match rates. Done is not a credentialing system that is current as of last quarter’s audit.

Done is a continuously verified, unified provider identity that every downstream system (credentialing, claims, directories, contracting) consumes from a single, trusted source. Done is a platform that ingests any format automatically, resolves identity across sources using patent-pending consensus scoring, and propagates changes to downstream systems when something changes. Done is infrastructure that operates continuously, not a project with a start date, an end date, and a budget.
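The article does not describe how the consensus scoring mentioned above works, so the following is only a generic illustration of the idea, not the platform's method. It is a minimal weighted-vote sketch in which each source's observation of a field counts in proportion to a trust weight. The source names, weights, and sample values are all assumptions made up for this example.

```python
from collections import defaultdict

# Hypothetical trust weights per source. A real system would calibrate
# these from observed accuracy rather than hard-coding them.
SOURCE_WEIGHTS = {
    "npi_registry": 0.9,
    "credentialing": 0.7,
    "roster": 0.5,
    "claims": 0.4,
}
DEFAULT_WEIGHT = 0.1  # weight given to any source not listed above


def consensus(observations):
    """Pick the value with the highest total weight.

    observations: non-empty list of (source, value) pairs.
    Returns (best_value, confidence), where confidence is the winning
    value's share of the total weight.
    """
    scores = defaultdict(float)
    for source, value in observations:
        scores[value] += SOURCE_WEIGHTS.get(source, DEFAULT_WEIGHT)
    total = sum(scores.values())
    best = max(scores, key=scores.get)
    return best, scores[best] / total


def resolve(provider_observations):
    """Resolve each field independently: {field: [(source, value), ...]}."""
    return {field: consensus(obs) for field, obs in provider_observations.items()}


unified = resolve({
    "address": [
        ("npi_registry", "55 Oak Ave"),
        ("credentialing", "100 Main St"),
        ("roster", "100 Main St"),
        ("claims", "55 Oak Ave"),
    ],
})
print(unified)
```

A low confidence share, as in this sample where the sources split almost evenly, is itself useful: it marks the field as one to re-verify rather than one to propagate downstream automatically.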

The CAQH 2025 Index has documented over $90 billion in annual administrative spending tied to routine transactions that remain manual or partially manual. The reason so much of that work hasn’t been automated is not a shortage of automation tools. It is that manual verification is being used to compensate for data that can’t be trusted. The $21 billion in remaining savings that the Index identifies isn’t waiting on better technology. It is waiting on better data infrastructure, specifically the kind that produces reliable, continuously validated inputs that automation can act on with confidence.

Manual record validation currently costs payers an average of $10 to $12 per record. Automated management at scale, through a purpose-built infrastructure platform, brings that cost to $2 to $10 per record annually, with the additional benefit that the result is a continuously maintained identity rather than a point-in-time check that begins decaying the moment it’s completed.

The economics of infrastructure investment are not complicated. The question is whether the investment is framed correctly.

The Reframe That Changes the Decision

Every organization acknowledges that the provider data problem needs to be solved. The disagreement is rarely about whether to solve it. The disagreement is about how, and that disagreement almost always comes back to a category error: treating an infrastructure problem as if it were a project.

Projects have a beginning and an end. They have a scope. They produce a deliverable. And when the deliverable is a cleaner version of data that will begin drifting the moment the project closes, the organization has paid for something that doesn’t address what was actually broken.

Infrastructure is different. It runs. It maintains itself. It updates continuously. It feeds downstream systems with data they can trust, rather than data that was accurate as of a date in the past. Health plans don’t manage their claims system as a periodic project. They don’t run their credentialing platform as an annual cleanup exercise. They run them as infrastructure, because the operations that depend on them require continuity, not episodic accuracy.

Provider data is no different in this respect. The organizations that have moved from project-oriented approaches to infrastructure-oriented ones are the ones closing the gap between where their auto-adjudication rates are and where they should be, between their Stars ratings and their performance goals, and between the $21 billion automation opportunity and what their technology investments are actually delivering.

The question for every health plan and healthcare operator still managing provider data through vendor patchworks, internal build projects, or periodic cleanup cycles is a direct one: at what point does the cost of the current approach, measured in claims rework, FTE burden, regulatory exposure, and automation underperformance, exceed the cost of replacing it with infrastructure that works continuously?

For most organizations, that crossover has already happened. The math was established in The Hidden Tax article. The adjudication signal was established in Auto-Adjudication Is a Provider Data Problem in Disguise. The Stars consequence was established in The Stars Are Watching. The automation ceiling was established in Why the $21B Automation Opportunity.

This article closes the loop. The reason the problem persists isn’t capability. It isn’t budget. It is the frame. Projects end. Infrastructure runs.

Ready to Think Differently About Provider Data?

If your current approach isn’t producing the results your organization needs, it may be time for a different conversation. We can help you understand what provider data infrastructure looks like for your specific environment, workflows, and compliance obligations.

Request a Strategy Session

No sales pitch. A working conversation about your current data posture and what’s standing between where you are and where you need to be.

We invite you to learn more through the third-party resources referenced here:

- Premier Inc. 2025 Claims Adjudication Survey: $25.7B in Costs, $18B Potentially Unnecessary

- CAQH 2025 Index: $258B Avoided, $21B Savings Opportunity Remaining

- Experian Health 2025 State of Claims: Denial Rates Still Rising

- AHA 2025: The Case for Automating Claim Denial Resolution (70% of Denials Overturned)